Do the Digits of Pi Actually Contain All of Shakespeare?

If pi is a “normal” number, the constant would contain much more than Shakespeare, resolving why such a random-looking number lives at the heart of simple circles

Published: 24 March 2024

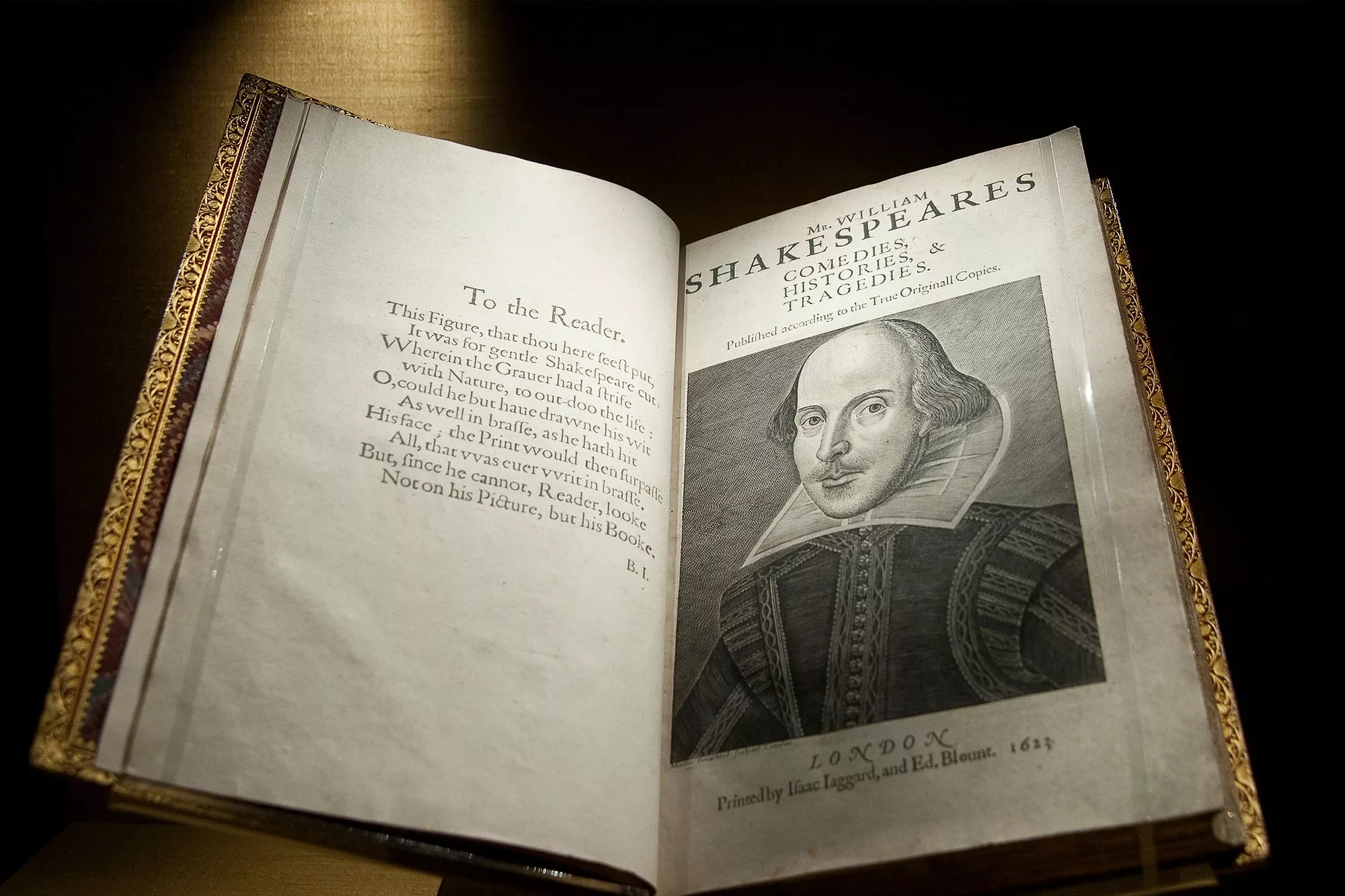

All circles, from onion rings to Saturn’s rings, share a magnificent property: their circumferences stretch about three times longer than their diameters. To be more precise (though still not exact), the circumferences are 3.14159 times longer, a ratio known as pi. Circles are such fundamental shapes that pi, the number that governs them, stamps its signature across the mathematical world. Pi is an irrational number, meaning its decimal expansion neither terminates (like one fourth does: 0.25) nor repeats (like one third does: 0.33333…). For such a perfectly symmetric shape, once considered divine, it might seem surprising that circles obey a number as disordered and abnormal as pi. Why didn’t the universe pick a normal ratio like 3 for its simplest shape, much to piphilologists’ chagrin?

Actually, mathematicians believe that pi is normal, both in a colloquial and a technical sense. As we’ll see, normal numbers are simultaneously bizarre and mundane. If pi is normal, it would imply that the constant contains much more than just Shakespeare, and it may also resolve the mystery of why such a random-looking number lives at the heart of simple circles.

Normal numbers have decimal representations that contain every digit from 0 to 9 equally often and contain every two-digit sequence from 00 to 99 equally often. That’s true as well for three-digit sequences and beyond. In the long run, the decimal expansion shows no favoritism toward any particular digits or pattern of them. Intuitively, if you pick a digit at random from a normal number, there should be a one-in-10 chance that it’s a 7 and a one-in-10 chance that it’s a 0. If you pick two consecutive digits, there should be a one-in-100 chance that it’s 63 or any other two-digit sequence.

To understand just how bizarre normal numbers are, let’s imagine the digits of a normal number encoding text: 01 stands for “a,” 02 stands for “b,” and so on, designating unique two-digit numbers to every letter and punctuation mark.
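
As a rough illustration of this scheme, here is a minimal Python sketch. The helper names `encode` and `decode` are made up for this example, and because the text does not assign specific codes to punctuation marks, the sketch handles only the 26 letters.

```python
import string

# Assumption: "a" -> "01", "b" -> "02", ..., "z" -> "26".
# Punctuation codes are not specified in the article, so they are omitted here.
LETTER_TO_CODE = {
    letter: f"{index:02d}"
    for index, letter in enumerate(string.ascii_lowercase, start=1)
}
CODE_TO_LETTER = {code: letter for letter, code in LETTER_TO_CODE.items()}


def encode(text: str) -> str:
    """Turn a word into a digit string, two digits per letter."""
    return "".join(LETTER_TO_CODE[letter] for letter in text.lower())


def decode(digits: str) -> str:
    """Turn a digit string back into letters, reading two digits at a time."""
    if len(digits) % 2 != 0:
        raise ValueError("expected an even number of digits")
    pairs = (digits[i:i + 2] for i in range(0, len(digits), 2))
    return "".join(CODE_TO_LETTER[pair] for pair in pairs)


print(encode("pie"))     # 160905
print(encode("bard"))    # 02011804
print(decode("160905"))  # pie
```

Searching a normal number for a text then amounts to searching its digits for the encoded string.
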
A consecutive string of digits may encode a word; for example, 160905 translates to “pie.” With this setup, normal numbers contain every possible text that has been written or could be written. Somewhere in the far reaches of the infinite decimal you’ll find all of Beyoncé’s lyrics, an exact copy of this article, a detailed description of what will happen to you tomorrow, every conversation you’ve ever had verbatim, and yes, every work of Shakespeare. You’ll also find every variant of these texts, including Hamlet where Claudius speaks only in pig Latin and infinitely many false narratives about what will happen to you tomorrow, all amidst vast stretches of garbled nonsense.

The longer the text, the farther out past the decimal point you’ll likely have to search. The word “bard,” encoded as 02011804 in our scheme, first appears over 82 million digits into pi. You would have to plunge to unimaginable depths before chancing upon a sonnet, let alone an entire play. But infinity doesn’t run out of space.

Just because a decimal is infinite and nonrepeating (i.e., irrational) does not mean that number would necessarily be categorized as normal. For example, consider the number 0.01001000100001… where an increasing number of zeroes separate the ones. This decimal goes on forever and never falls into a repeating loop, but even the simple strings “7” and “11” never appear.

Mathematicians have not been able to prove that pi is normal. Technically, a number could contain all text without every string occurring equally often as required by normality. This weaker condition is called disjunctivity, and we don’t know whether pi is disjunctive either. In fact, we can’t even rule out that some digit appears only finitely many times in pi. For all we know, after the quadrillionth digit, fives could never appear in pi again. Statistical tests of trillions of digits of pi do accord with normality, but testing any finite number of digits will never suffice for a proof.
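
A toy version of such a statistical test can be sketched in Python. This is only an illustration on 10,000 digits, not the method used in the large-scale tests mentioned above. The digits of pi are computed here with Machin's formula and plain integer arithmetic (a choice made for this sketch), and all function names are hypothetical. The same count is run on 0.01001000100001… for contrast.

```python
from collections import Counter


def arctan_inv(x: int, unity: int) -> int:
    """Return arctan(1/x) * unity using integer arithmetic only."""
    term = unity // x
    total = term
    x_squared = x * x
    divisor = 3
    sign = -1
    while term:
        term //= x_squared
        total += sign * (term // divisor)
        sign = -sign
        divisor += 2
    return total


def pi_decimals(n: int, guard: int = 10) -> str:
    """First n digits of pi after the decimal point (Machin's formula)."""
    unity = 10 ** (n + guard)  # extra guard digits absorb rounding error
    pi_scaled = 4 * (4 * arctan_inv(5, unity) - arctan_inv(239, unity))
    return str(pi_scaled // 10 ** guard)[1:]  # drop the leading "3"


def sparse_ones(n: int) -> str:
    """First n decimals of 0.01001000100001... (ever-longer runs of zeros)."""
    chunks, length, run = [], 0, 1
    while length < n:
        chunks.append("0" * run + "1")
        length += run + 1
        run += 1
    return "".join(chunks)[:n]


def digit_shares(digits: str) -> dict:
    """Fraction of positions occupied by each digit 0-9."""
    counts = Counter(digits)
    return {d: counts.get(d, 0) / len(digits) for d in "0123456789"}


N = 10_000
pi_digits = pi_decimals(N)
sparse_digits = sparse_ones(N)

for name, digits in [("pi", pi_digits), ("0.01001...", sparse_digits)]:
    shares = digit_shares(digits)
    print(name, " ".join(f"{d}:{shares[d]:.3f}" for d in "0123456789"))

# Neither string ever occurs in the sparse example.
print("7" in sparse_digits, "11" in sparse_digits)  # False False
```

In a sample this small, the shares for pi should all land near one in 10, while the second number uses only 0 and 1. As the article notes, agreement on a finite sample is evidence and not a proof.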

Mathematicians think that pi, Euler’s number (e), the square root of two, and most of your other favorite irrational numbers are normal. However, proving that for any particular number is remarkably difficult. We only know a few concrete examples, the simplest being the Champernowne constant. To construct that number, you merely concatenate all of the positive integers in ascending order after a decimal point: 0.12345678910111213… Silly as the Champernowne constant may be, it was one of the earliest proven examples of a specific number being normal, and the proof is not trivial.

Given the strange properties of normal numbers and how few specific examples we know, why do we suspect that pi is normal? Here’s the mundane (but also amazing) part: almost all numbers are normal. If you were to close your eyes and pick a point on the number line at random, the probability you choose a normal number (and hence one that encodes all of Shakespeare) is, well, 100 percent. Typically, we think of 100 percent probability as “guaranteed to happen,” but this meaning breaks down when dealing with infinite sets. Of course, it’s possible that you happen to pick an integer like 743 or a fraction like ⅘, neither of which is normal, but the density of normal numbers dwarfs such possibilities so thoroughly that it’s appropriate to call the probability 100 percent. We’ll set aside the details, but probability gets weird in the vicinity of infinity.
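
To make the Champernowne construction described above concrete, here is a short Python sketch that builds a finite prefix of the constant and tallies its digits. The function name `champernowne_prefix` is invented for this example, and a finite tally like this only illustrates the idea; it says nothing rigorous about normality.

```python
from collections import Counter


def champernowne_prefix(last_integer: int) -> str:
    """Digits after the decimal point contributed by 1, 2, ..., last_integer."""
    return "".join(str(n) for n in range(1, last_integer + 1))


print(champernowne_prefix(13))  # 12345678910111213

# Tally the digits contributed by every integer from 1 to 99,999.
digits = champernowne_prefix(99_999)
counts = Counter(digits)
for d in "0123456789":
    print(d, counts[d], f"{counts[d] / len(digits):.4f}")
```

In this prefix, the digits 1 through 9 each appear exactly 50,000 times, while 0 appears only 38,889 times because integers are written without leading zeros. That shortfall shrinks as a share of the total when the prefix grows, a reminder that normality is a statement about the long-run limit and not about any finite stretch of digits.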